The long promise: Is AI now opening robotics to the real world?

Arthur Richards

Professor of Robotics and Control, University of Bristol and co-director of the Bristol Robotics Lab

Robotics has always carried a certain promise. For decades we have imagined machines that work alongside people, helping with everyday tasks and making life easier. It is a fun and often romantic notion, driven by popular culture from Robby the Robot in Forbidden Planet to R2-D2 in Star Wars.

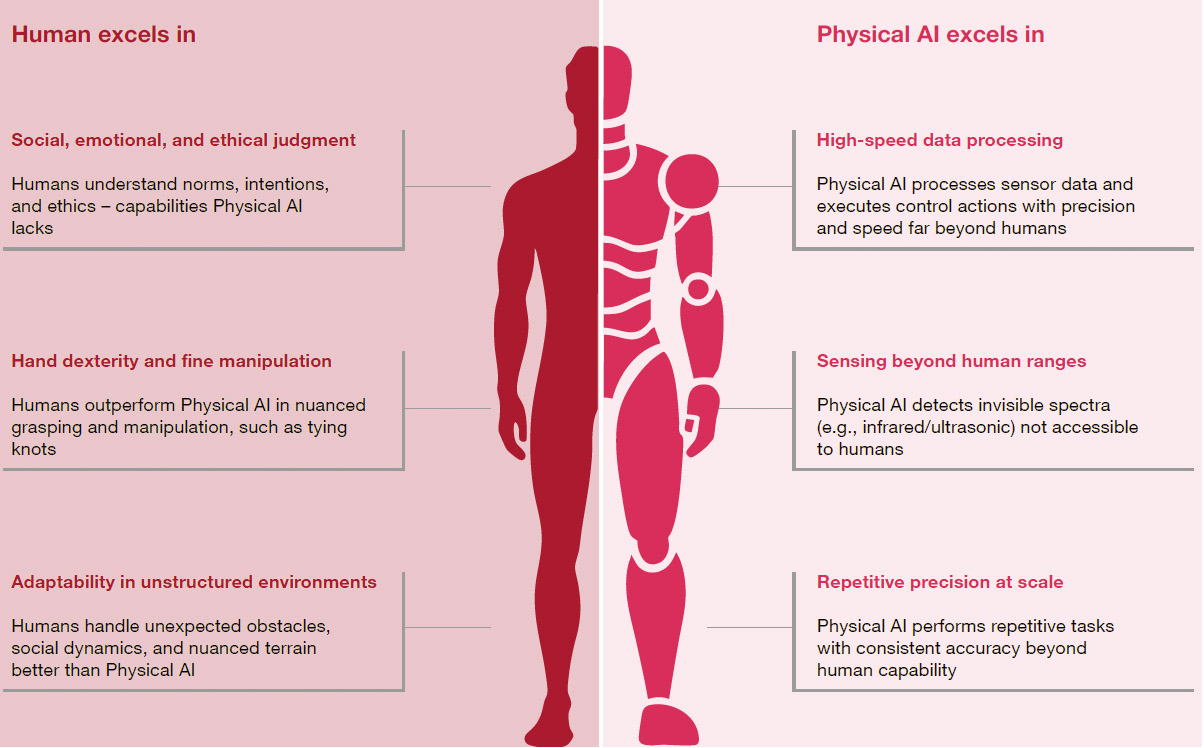

In practice, robotics has developed rather differently. Most robots today operate in tightly controlled environments such as factories and warehouses, where their movements can be carefully defined in advance. These systems are incredibly effective at repetitive, structured tasks, but far less suited to the unpredictable nature of the real world.

What is beginning to change that picture is artificial intelligence.

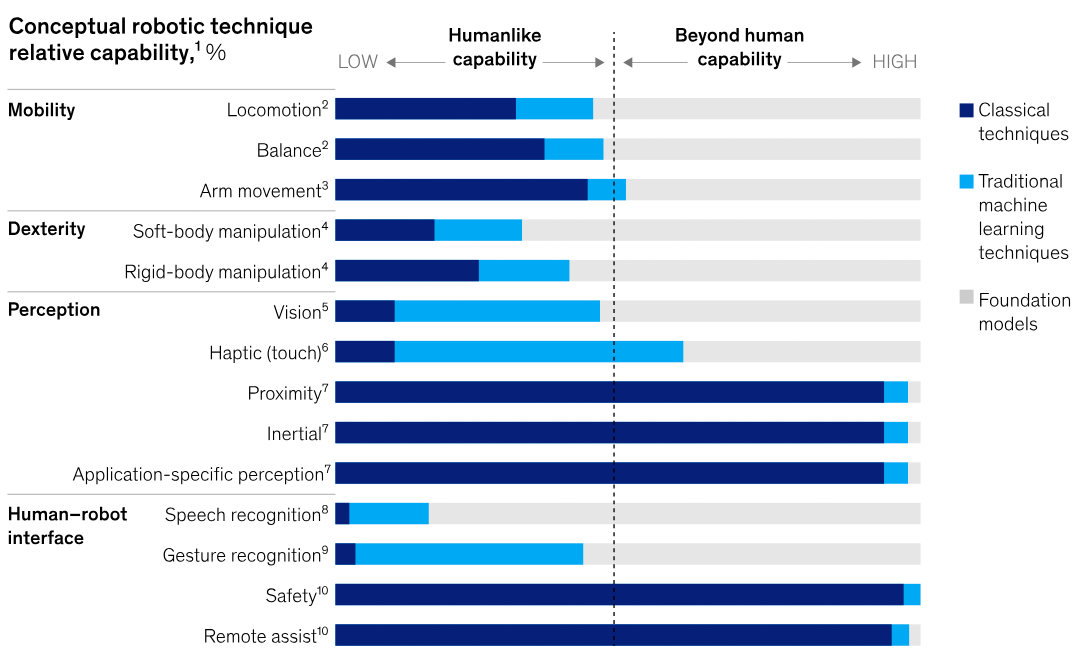

AI is introducing a new way of thinking about how robots are designed and operate. Traditionally, robots have been controlled through carefully written code. Engineers would build a model of the machine and then use that model to decide how it should move, react and interact with objects around it. Robotics required detailed understanding of the machine and a great deal of programming to translate that knowledge into action.

The alternative AI offers is learning. Techniques such as reinforcement learning and vision-language-action models allow robots to learn behaviours from data rather than relying entirely on explicit instructions. In simple terms, instead of telling a robot exactly what to do, we can increasingly train it to work things out for itself.

That change is already influencing the way robotics research is carried out. Instead of focusing purely on modelling and programming, researchers now spend much more time thinking about data: where it comes from, how much is needed, and how systems can learn from it. Robotics is becoming more data-driven, and that has implications for how the field develops and for the kinds of skills future roboticists will need.

AI is also opening the door to richer interaction between robots and the physical world. Researchers are developing sensing systems that go beyond simple contact switches to give machines a more nuanced understanding of touch. Rather than detecting only whether something has been grasped, robots can begin to interpret shape, texture and grip, which is essential when handling objects or working alongside people.

At the same time, there are limits to what robots can currently do. Modern systems are becoming very capable at perceiving their environment: recognising objects, identifying people and mapping the world around them. But understanding what those environments mean, or predicting how situations might evolve, remains a much harder challenge.

Even so, if AI can help robots operate more flexibly and safely, it opens the possibility of machines working outside the carefully controlled environments they inhabit today. That would allow robots to move beyond cages and production lines and begin to operate alongside people in far more varied settings.

For those of us working in robotics, that remains one of the most interesting and demanding challenges in the field and, indeed, one that industry is pushing us to solve.

A digital publication from Bristol Innovations, University of Bristol